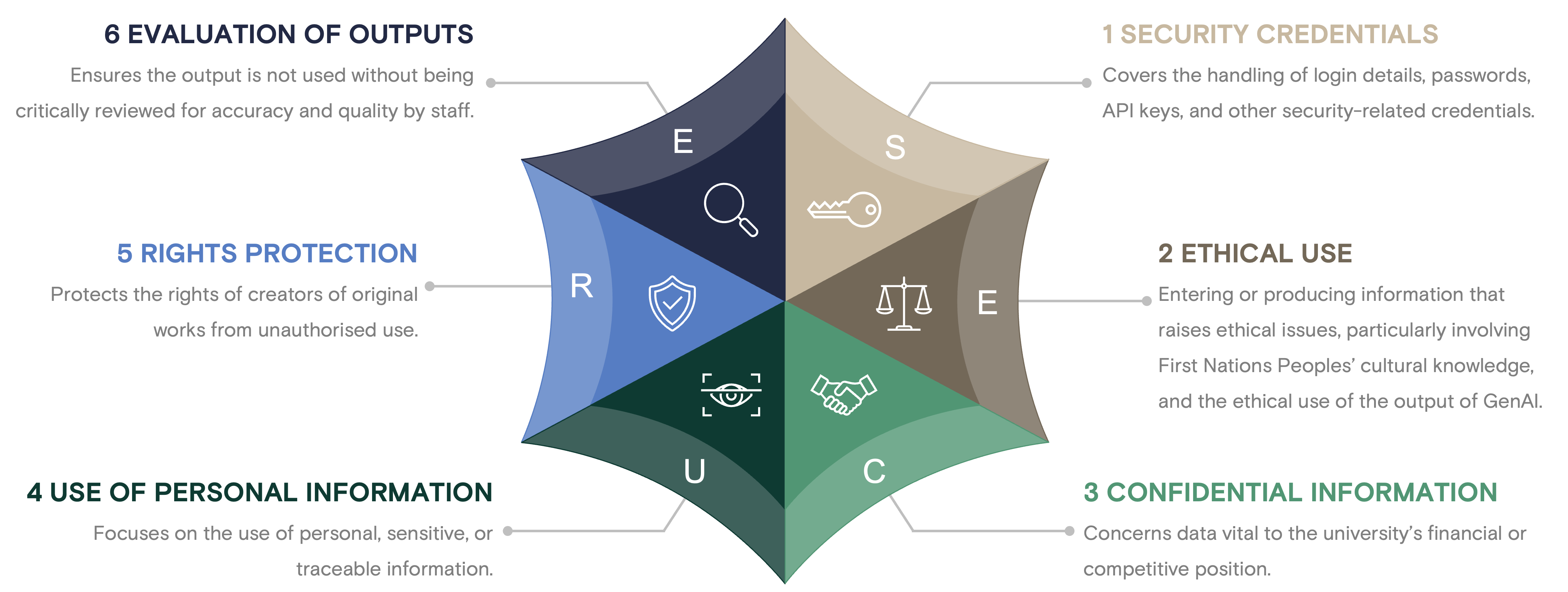

The S.E.C.U.R.E. GenAI Use Framework for Staff is designed to guide university staff in making informed decisions about using GenAI software.

The S.E.C.U.R.E. framework provides staff with clear, practical guidance for the responsible use of GenAI tools within defined risk parameters, without requiring explicit University approval for low-risk activities.

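It organises GenAI-related risks into six categories: Security Credentials, Ethical Use, Confidential Information, Use of Personal Information, Rights Protection, and Evaluation of Outputs.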

Making a decision using S.E.C.U.R.E.

If staff answer ‘No’ to all six questions below, they are free to use GenAI as proposed. Note that S.E.C.U.R.E. should not replace existing oversight/approval mechanisms.

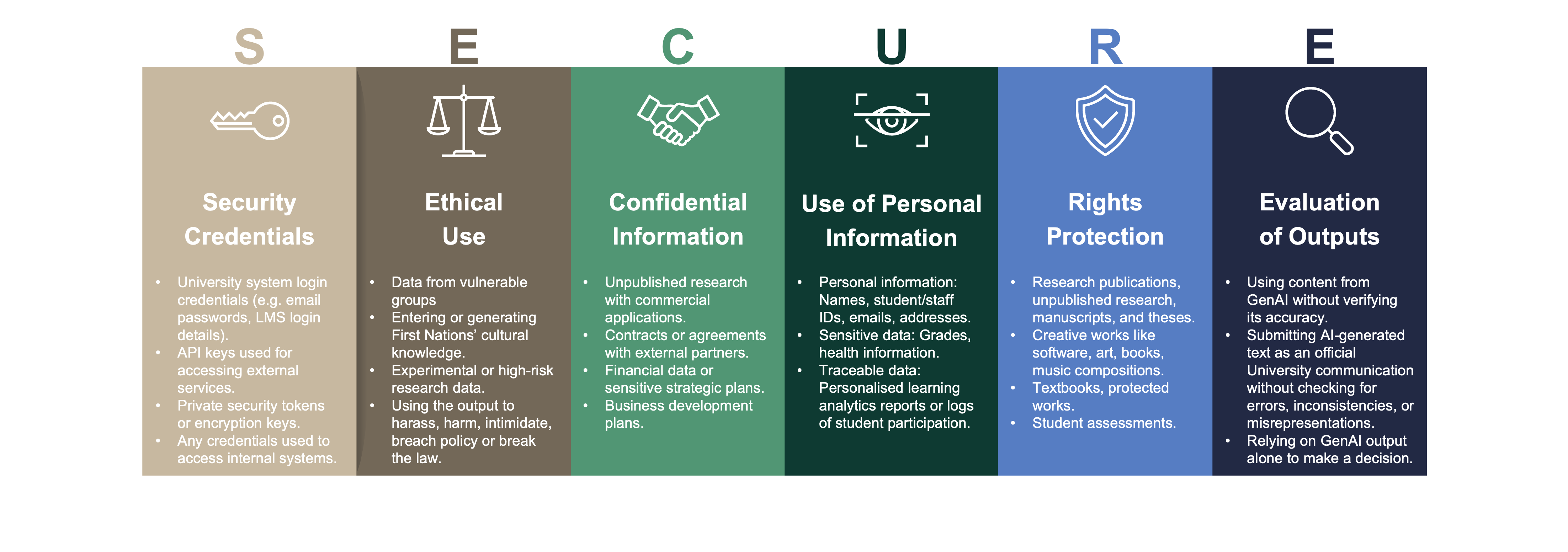

Security Credentials

Are you entering information such as login credentials, passwords, API keys, or any other sensitive security-related data that could compromise the security of university systems or personal accounts if exposed?

Ethical Use

Are you entering or generating information that may raise ethical concerns, or that involves vulnerable populations or First Nations’ cultural knowledge? Will you use the output to breach University policy, break the law, or harm an individual, group or organisation?

Confidential Information

Are you entering information which, if disclosed, could cause harm to the university, especially if involving external partnerships or proprietary information?

Use of Personal Information

Are you entering information that directly or indirectly identifies a person, contains personal information (as defined by the Privacy and Personal Information Protection Act 1998), is considered Highly Confidential, Confidential or Private, or is traceable to an individual?

Rights Protection

Are you entering, without permission, original works or creations protected by copyright, trademark, or patent law, including materials for teaching or research, or proprietary information that is only available to current staff or students at the University?

Evaluation of Outputs

Are you using the output of GenAI without evaluating it to ensure it is accurate, reliable and unbiased, or using it in isolation to make decisions directly affecting individuals?
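The decision rule above (all six answers must be ‘No’) can be sketched in a few lines of Python. This is only an illustrative sketch, not part of the framework: the names `CATEGORIES`, `flagged_categories` and `free_to_use` are hypothetical, and the choice to reject unanswered questions is an assumption. The framework does not state what should happen after a ‘Yes’, so the sketch only reports which categories were flagged.

```python
from typing import Dict, List

# The six S.E.C.U.R.E. risk categories, in the order the questions appear.
CATEGORIES = [
    "Security Credentials",
    "Ethical Use",
    "Confidential Information",
    "Use of Personal Information",
    "Rights Protection",
    "Evaluation of Outputs",
]


def flagged_categories(answers: Dict[str, bool]) -> List[str]:
    """Return the categories answered 'Yes' (True), in framework order.

    Assumption: a missing answer raises an error rather than being
    treated as 'No', so an unanswered question never clears a use.
    """
    missing = [c for c in CATEGORIES if c not in answers]
    if missing:
        raise ValueError(f"Unanswered questions: {missing}")
    return [c for c in CATEGORIES if answers[c]]


def free_to_use(answers: Dict[str, bool]) -> bool:
    """True only when all six questions were answered 'No' (False)."""
    return not flagged_categories(answers)


# Example: one 'Yes' is enough to stop the proposed use from being cleared.
example = {c: False for c in CATEGORIES}
example["Use of Personal Information"] = True
print(free_to_use(example))
print(flagged_categories(example))
```

A result of `True` here mirrors only the framework's "free to use GenAI as proposed" outcome; it does not stand in for any existing oversight or approval mechanism.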

Risk category examples

Awards

WorldWIDE EdTech Awards 2026

AI Safety Tool of the Year

Association for Tertiary Education Management (AMTEM) 2025 Excellence Awards High Commendation - Governance, Risk and Policy

Testimonials

"A standout example of responsible leadership on one of the sector’s most pressing issues." Association for Tertiary Education Management (AMTEM) 2025 Excellence Awards judges

"[The] S.E.C.U.R.E. GenAI Use Framework for Staff is one of the most robust examples of the type of thinking we should model working with the AI tools we have on our campus. It thoughtfully walks through a risk-observant mindset.” Marc Watkins, Assistant Director of Academic Innovation at University of Mississippi

"A new practical framework providing easily understood and applied lessons helping academic staff work out which AI tools to use has been gifted to the sector." Tim Winkler, Future Campus

"A really simple, very elegant checklist." Claire Field, Principal at Claire Field & Associates

"This is such a valuable resource — a clear, sector-informed overview of generative AI in higher education." Dr Prue Laidlaw, Sub Dean, Learning and Teaching | Deputy Chair, Academic Senate, Charles Sturt University

"A well considered, helpful framework!" Bridget Pearce, Pedagogical Coach and Senior English Teacher, Brisbane Grammar School

"A simple way to think about responsible GenAI use - without slowing down low-risk work." Sam Burrett, AI Lead at MinterEllison

"The S.E.C.U.R.E framework... outlines key considerations one should reflect on when engaging in dialogue with (or prompting) language models." Claes Weise Schiermer Mørkeberg, Senior consultant at University College Copenhagen

"[S.E.C.U.R.E.] provides sensible, practical guidance through which staff can minimise risk without being impeded by overly rigid decision processes that may hamper innovation in low risk use cases." Sam Doherty, Education and Innovation Coordinator, University of Newcastle

"Using GenAI at work? Start with these six questions." Albert Suryadi, Principal Consultant, Altis Consulting

"The SECURE framework nails the balance between innovation and responsibility." Kevin Neary, CEO at Orcawise

"This is a clear, actionable starting point for any AI governance, compliance, or risk management strategy. It’s also lightweight enough to support fast-paced, low-risk AI experimentation." Joseph V Thomas, former Manager Director at Accenture

Alignment

The S.E.C.U.R.E. framework supports responsible AI adoption in Higher Education by aligning with institutional policies, government regulations, professional codes, and industry standards.

Voluntary AI Safety Standard

The Voluntary AI Safety Standard offers practical guidance for Australian organisations on the safe and responsible use and development of artificial intelligence.

Universities 2024 Financial Audit Report

The Audit Office of NSW recently published the Universities 2024 Financial Audit report, which includes findings and recommendations on AI.

Higher Education Standards Framework (HESF)

These Standards represent the minimum acceptable requirements for the provision of Higher Education in or from Australia by higher education providers registered under the TEQSA Act.

UNESCO's AI Competency Framework for Teachers

The UNESCO AI Competency Framework for Teachers sets out the knowledge, skills and values teachers require to navigate the use of AI in education.

Jisc Principles for the use of AI in FE colleges

Jisc (UK), in partnership with the Association of Colleges, established six principles in 2023 to guide the responsible use of artificial intelligence in further education colleges.

Download

S.E.C.U.R.E. GenAI Use Framework for Staff © 2025 Mark A. Bassett, Charles Sturt University. Licensed under CC BY-NC-SA 4.0.